PRODUCTS

Image: Detect harmful visual content automatically

DETECTION

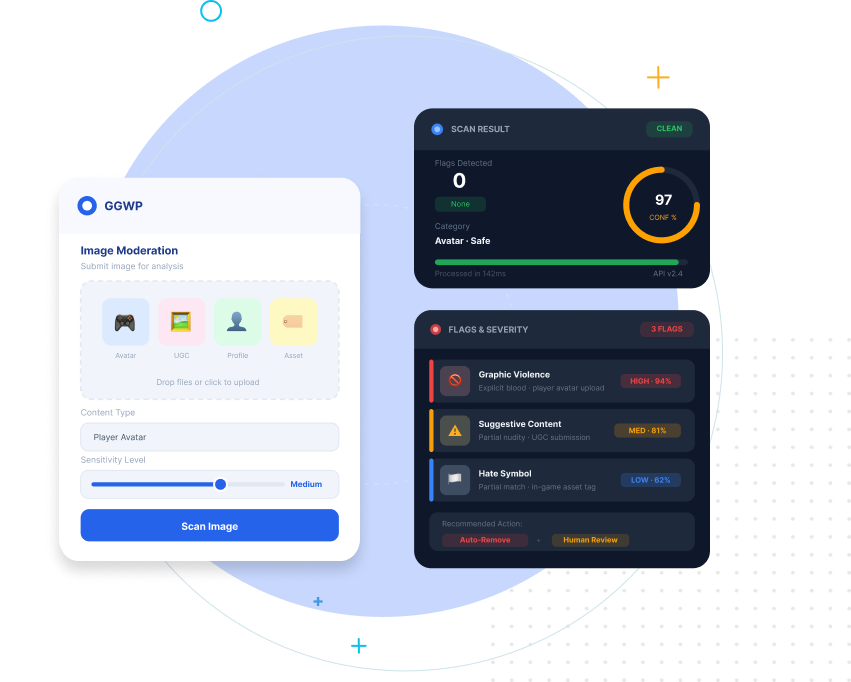

AI Image Moderation

GGWP’s Image Moderation API enables you to automatically detect and classify harmful or policy-violating visual content across your platform. Built for games and interactive communities, it processes user-submitted images such as avatars, profile photos, UGC uploads, and in-game assets, returning structured flags, severity levels, and confidence scores so your team can act quickly and consistently.
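
As a rough illustration of how a client might act on such a result, the sketch below assumes a hypothetical response shape: a `flags` list whose entries carry `category`, `severity`, and `confidence`. The field names, the three-level severity scale, and the 0.8 threshold are all assumptions made for this example, not GGWP's documented schema.

```python
from dataclasses import dataclass

# Hypothetical severity scale; the real API may use different labels.
SEVERITY_ORDER = {"low": 1, "medium": 2, "high": 3}


@dataclass(frozen=True)
class Flag:
    category: str      # e.g. "violence" (assumed label)
    severity: str      # one of the SEVERITY_ORDER keys (assumed)
    confidence: float  # assumed to range from 0.0 to 1.0


def parse_flags(response: dict) -> list[Flag]:
    """Convert an assumed JSON response body into Flag objects."""
    return [
        Flag(f["category"], f["severity"], float(f["confidence"]))
        for f in response.get("flags", [])
    ]


def decide_action(flags: list[Flag], min_confidence: float = 0.8) -> str:
    """Return "block", "review", or "allow" for a single image."""
    # Only flags at or above the confidence threshold can trigger a block.
    confident = [f for f in flags if f.confidence >= min_confidence]
    if any(SEVERITY_ORDER[f.severity] >= SEVERITY_ORDER["high"] for f in confident):
        return "block"
    # Any remaining flag, including low-confidence ones, goes to a human.
    if flags:
        return "review"
    return "allow"
```

Routing low-confidence or lower-severity flags to review rather than blocking them outright is one possible policy; the thresholds would need tuning against your own platform rules.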

CONTROL

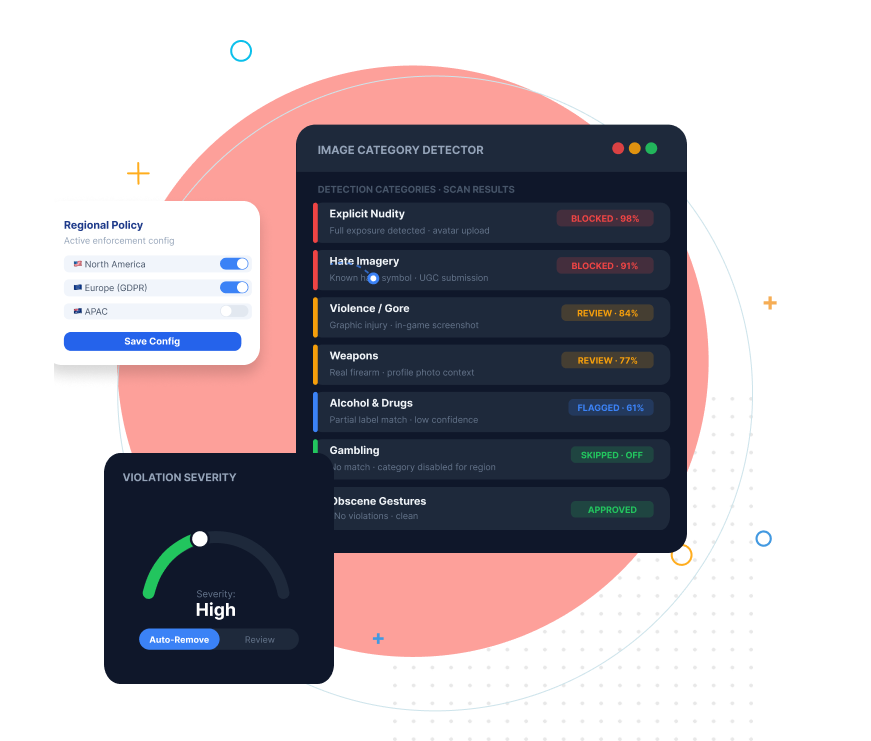

Configurable Visual Safety Detection

The system detects a wide range of categories, including explicit and non-explicit nudity, hate imagery, violence, gore, weapons, alcohol and drugs, gambling, and obscene gestures. Each category can be enabled or disabled at onboarding or overridden per API call, allowing you to align enforcement with your platform policies and regional requirements.
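
Per-call category configuration could look something like the sketch below, which layers per-request overrides on top of account-level defaults. The category identifiers are derived from the list above, but their exact spelling, the payload keys (`image_url`, `user_id`, `categories`), and both helper functions are hypothetical and stand in for whatever the actual API expects.

```python
from typing import Optional

# Identifiers derived from the category list above; exact names are assumed.
KNOWN_CATEGORIES = frozenset({
    "explicit_nudity", "non_explicit_nudity", "hate_imagery", "violence",
    "gore", "weapons", "alcohol_and_drugs", "gambling", "obscene_gestures",
})


def resolve_categories(defaults: set[str], overrides: dict[str, bool]) -> set[str]:
    """Apply per-call overrides on top of the onboarding defaults."""
    unknown = (set(defaults) | set(overrides)) - KNOWN_CATEGORIES
    if unknown:
        raise ValueError(f"unknown categories: {sorted(unknown)}")
    enabled = set(defaults)
    for name, turn_on in overrides.items():
        if turn_on:
            enabled.add(name)
        else:
            enabled.discard(name)
    return enabled


def build_request(
    image_url: str,
    user_id: str,
    defaults: set[str],
    overrides: Optional[dict[str, bool]] = None,
) -> dict:
    """Build a hypothetical request payload for one image."""
    return {
        "image_url": image_url,
        "user_id": user_id,
        "categories": sorted(resolve_categories(defaults, overrides or {})),
    }
```

For example, a region where gambling imagery is restricted might pass `{"gambling": True}` as an override while leaving the onboarding defaults untouched elsewhere.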

ENFORCEMENT

Integrated Mod Workflows

Image moderation integrates directly into your existing GGWP workflow. Every request is tied to a user ID, allowing detected incidents to influence reputation scoring and appear in your moderation dashboard alongside contextual gameplay data. With configurable enforcement and optional Automod integration, you can move from detection to action without adding operational overhead.