03 Dec

Online communities, multiplayer games, and user-generated content (UGC) platforms all share one common goal: keeping conversations safe and respectful. As more users participate, the risk of toxic behavior, spam, and harmful content slipping through grows with them. This makes moderation services a major part of how companies protect their users and support healthy engagement.

Moderation services are not all the same. Some focus on real-time detection and analysis, some on policy enforcement, and others are built to handle specific types of content, such as text, voice, or images and video. For companies running digital platforms, it’s important to understand the different categories of moderation services, what they do, and how they impact both community health and the business.

Quick Takeaways

- Moderation services protect users and brand reputation by filtering harmful or disruptive content.

- Different moderation services specialize in handling voice, chat, images, video, sentiment analysis and policy enforcement.

- Real-time moderation is especially important in multiplayer gaming, live chat environments, and any fast-paced, high-volume UGC community.

- Policy-aligned enforcement creates consistency and transparency for communities.

- Companies that combine multiple moderation services, especially within a single toolset like GGWP, get more accurate results and stronger community trust.

1. Text Moderation

Text moderation is one of the most widely used services, because chat and text are still the most popular form of communication across digital platforms. Moderation tools, like GGWP’s Community Copilot, scan and filter content to flag harmful language, spam, and harassment in community forums, in-game chat, and messaging apps.

AI Advancements

Modern text moderation has come a long way and now goes far beyond basic keyword filters. Context matters: platforms need to distinguish between a casual phrase like “you’re trash” among friends and a targeted insult intended to harm another user. Using AI models, including LLMs, that are designed specifically for your type of community is key here.

Protection from Harmful Conversations

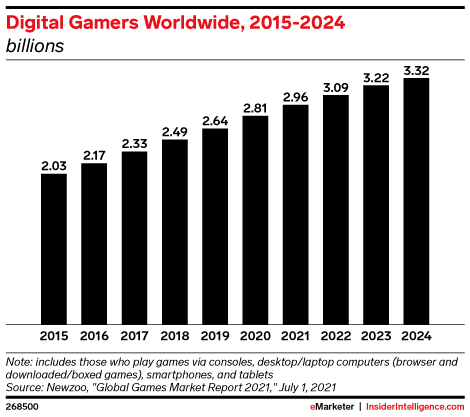

For user-generated content platforms, like forums or comments sections, text moderation makes sure discussions stay constructive and free of harmful content that could damage the platform’s reputation. For multiplayer games, text moderation keeps chat channels healthy and prevents toxic players from driving others away. With a reported total of 3.32 billion gamers worldwide in 2024, there’s a lot to lose if your moderation is slacking.

2. Voice Moderation

Voice moderation has become increasingly important with the growth of multiplayer games, voice chat platforms, and streaming communities. Unlike text, voice comes with a new set of issues – conversations move fast, slang evolves quickly, and harmful language can have an immediate impact on your platform.

Detect Harmful Behavior Instantly

Voice moderation tools use real-time detection to flag slurs, harassment, and much more. These tools can categorize issues by severity, change a player’s reputation based on a flag, apply automated responses like muting, or escalate serious incidents to human moderators for review.
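To make that flow concrete, here is a minimal sketch of how a single voice flag might be triaged. It assumes an upstream detector has already produced a severity score between 0 and 1. The `VoiceFlag` type, the thresholds, the reputation penalty, and the action names are all hypothetical and chosen only for illustration; they do not describe any specific product.

```python
from dataclasses import dataclass

# Hypothetical thresholds, chosen only for illustration.
MUTE_THRESHOLD = 0.5
ESCALATE_THRESHOLD = 0.8


@dataclass
class VoiceFlag:
    player_id: str
    category: str    # e.g. "slur" or "harassment"
    severity: float  # 0.0 (mild) to 1.0 (severe), from an assumed upstream detector


def triage(flag: VoiceFlag, reputation: dict[str, float]) -> str:
    """Lower the player's reputation and pick a response for one flag."""
    current = reputation.get(flag.player_id, 1.0)
    # Reputation drops in proportion to severity and never goes below zero.
    reputation[flag.player_id] = max(0.0, current - 0.25 * flag.severity)

    if flag.severity >= ESCALATE_THRESHOLD:
        return "escalate_to_human"
    if flag.severity >= MUTE_THRESHOLD:
        return "auto_mute"
    return "log_only"
```

Calling `triage(VoiceFlag("p1", "harassment", 0.6), {})` would return `"auto_mute"` under these assumed thresholds, while the most severe flags skip automation and go to a human moderator.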

Separating Banter from Harassment

Without intelligent voice moderation like GGWP, companies risk normalizing toxic behavior under the excuse that “trash talk is part of the culture.” This has a direct impact on revenue, because players who experience abuse are more likely to leave, which reduces engagement and lifetime value. By investing in voice moderation services, your company protects both players and the business.

3. Image and Video Moderation

User-generated content platforms rely heavily on images and video, which makes moderation in these formats extra important. Offensive or harmful visual content can spread quickly and create lasting reputational damage.

Protection from Explicit Content

Image and video moderation services can automatically detect explicit content, violent imagery, and other policy violations before they ever appear in feeds or galleries. Some systems can also scan for hidden or manipulated content that could bypass simple filters.

Keep Your Brand Safe

For companies hosting creative communities, recipe sites, or fan forums, image and video moderation keeps the platform safe while still allowing users to express themselves. The speed and accuracy of this type of moderation help platforms avoid harmful incidents that could alienate users or attract negative publicity.

4. Automated Policy Enforcement

Another key to effective moderation is automated policy enforcement. Detecting infractions alone won’t cut it – companies need systems that align moderation decisions with their community standards and enforcement policies, and that automatically take action on them when possible.

Policy-based moderation services allow companies to define what behaviors are acceptable, what actions should be taken at each severity level, and how appeals or disputes are handled. By making enforcement transparent and consistent, these services help build trust with users who want to know that rules are applied fairly. Once these policy points are defined and reliably detected, you can automate enforcement and free up moderators for escalated cases.

For example, policy-aligned moderation might include:

- Instant bans for threats or hate speech.

- Temporary mutes for harassment or targeted insults.

- Warnings for spam or disruptive behavior.

- Transparent appeals processes to ensure fairness.

- Congratulatory messages to users for cooperative behavior.

This type of structured moderation keeps voice, text, and user-generated content consistent, and helps companies demonstrate that they are serious about maintaining safe and respectful communities.
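The example policy above could be expressed as a simple lookup table. This is only a sketch under stated assumptions: the category names, action names, and the `enforce` helper are hypothetical, and sending unknown categories to human review is one reasonable design choice rather than a requirement.

```python
# Hypothetical policy table mirroring the examples above; categories and
# action names are illustrative, not a real product configuration.
POLICY = {
    "threat": "instant_ban",
    "hate_speech": "instant_ban",
    "harassment": "temporary_mute",
    "targeted_insult": "temporary_mute",
    "spam": "warning",
    "disruptive_behavior": "warning",
    "cooperative_play": "congratulate",
}

# Sanctions that a user may appeal; positive messages and unknowns are excluded.
APPEALABLE = {"instant_ban", "temporary_mute", "warning"}


def enforce(category: str) -> dict:
    """Look up the action for a detected category.

    Categories missing from the policy go to a human moderator instead of
    being actioned automatically.
    """
    action = POLICY.get(category, "human_review")
    return {"category": category, "action": action, "can_appeal": action in APPEALABLE}
```

Keeping the policy in one table means the same rules apply whether a violation was detected in text, voice, or other user-generated content, and every sanction carries an appeal flag alongside it.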

5. User Reputation Scores

Each moderation service serves its own purpose, which is why you’re better off combining them. Text, voice, image, and video moderation and real-time sentiment analysis all provide different perspectives, while automated policy enforcement prioritizes speed and fairness. Factoring all of these data points together into a user reputation score can give you a holistic view of each of your users.

More Inputs = Higher Precision

By using multiple moderation services together, companies create a more reliable view of user behavior on which to potentially automate actions. A text message alone may not reveal intent, but when combined with voice chat or user reports, it paints a much clearer picture. This multi-layered approach helps platforms make better decisions, automate fair sanctions, and provide stronger protection for their communities.
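As a rough illustration of combining inputs, the sketch below folds per-channel signals into one reputation score. The weights, the channel names, and the 0 to 100 scale are all assumptions made for this example; a real system would tune them against its own data.

```python
# Hypothetical weights per signal source; a real system would tune these.
WEIGHTS = {"text": 0.3, "voice": 0.4, "user_reports": 0.3}


def reputation_score(signals: dict[str, float]) -> float:
    """Combine per-channel toxicity signals (0.0 clean to 1.0 toxic) into a
    reputation score from 0 to 100, where higher is better.

    Channels with no data are skipped and the remaining weights are
    renormalized, so a text-only user is still scored.
    """
    known = {name: value for name, value in signals.items() if name in WEIGHTS}
    if not known:
        return 100.0  # no evidence of bad behavior
    total_weight = sum(WEIGHTS[name] for name in known)
    toxicity = sum(WEIGHTS[name] * value for name, value in known.items()) / total_weight
    return round(100.0 * (1.0 - toxicity), 1)
```

With these assumed weights, a borderline text signal on its own gives a middling score, and adding a strongly negative voice signal for the same user pulls the score down, which is the "clearer picture" that extra inputs provide.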

For user-generated content platforms, this means safer spaces that encourage ongoing participation. For gaming companies, it means fewer players leaving due to negative environments. In both cases, excellent moderation services protect brand reputation and drive better results in the long run.

6. Real-Time Sentiment Analysis

Real-time sentiment analysis provides companies with unprecedented business intelligence they can act on. As a part of GGWP’s mission to become an end-to-end Community Copilot, our Pulse sentiment analysis tool turns previously unseen and unheard in-game conversations into actionable insights, community growth, and revenue generation.

How Does Real-Time Sentiment Analysis Work?

GGWP’s Pulse analyzes anonymized text and voice chat data in real time to give developers more accurate sentiment analysis and deeper insights into what their players truly think about their games. These insights lead directly to product iteration and can drive real, measurable ROI.

What are the Benefits?

Using these insights, brands can access unfiltered player sentiment as it happens, spot emerging trends, and improve player engagement. Player feedback is essential to customer support, product, and marketing teams – and this allows you to get that feedback even quicker.

Building Stronger Communities With Layered Moderation

Moderation services don’t only stop harmful content – they protect users, support fair engagement, and build communities that people actually want to stay in. From text and voice to sentiment analysis and policy enforcement, each service has a role to play in creating safe and thriving digital spaces.

Companies that invest in the right combination of moderation services gain more than just safer platforms. They earn the trust of their users, get better retention, and encourage stronger growth.

GGWP’s moderation services are built to help companies manage risk, protect users, and support healthier community engagement. If you’re ready to improve your platform with voice, chat, and real-time moderation tools that align with your vision and policies, contact us today to learn how we can help!